The Course Is Dying. What Replaces It Already Exists - And Institutions That Understand This Have a Structural Advantage.

Talent & Workforce Development

Editor's note

The course had a good run. For most of the twentieth century it was the right container: a defined period of time, a body of content, a test at the end, a credential on the way out. It organised learning into something administrable. That was not nothing. But Dr Philippa Hardman, Cambridge scholar and OpenAI education advisor, made a pointed observation in her recent newsletter: agentic AI tools have begun completing asynchronous online courses autonomously, clicking through modules, answering quizzes, and passing assessments without a human learner present. Institutions are scrambling to respond with security measures. Hardman's argument is that they are solving the wrong problem. If an AI can complete your course without anyone learning anything, the issue was never the AI. It was always the course.

Feature

Hardman's argument, developed across several pieces of research and practice, starts from a Salesforce study that is worth sitting with: employees who scored 90% or above on standard compliance course assessments demonstrated only 34% protocol adherence in actual workplace situations. The course measured recall. The workplace required application. These are not the same thing, and the gap between them is not a measurement failure. It is a design failure that has been tolerated for decades because it was convenient. A fixed time window, a content library, a quiz bank, and a certificate at the end is cheaper to produce than something that actually changes behaviour. And so it was produced, at industrial scale, and called professional development.

What is replacing the course is not another format. It is a different unit of learning entirely. The unit that actually produces durable capability is the iteration cycle embedded in real work: a problem encountered in context, a tool or framework applied to it, an output produced and evaluated against a real standard, and a reflection on what the result reveals. This is not new knowledge to anyone who has read Kolb or built a serious apprenticeship model. What is new is that AI can now mediate this cycle at scale, inside real institutional workflows, with enough contextual sensitivity to respond to what the learner is actually producing rather than what the content designer anticipated three months earlier. Hardman cites the forgetting curve as devastating to asynchronous learning: without application, 60% of knowledge vanishes within 48 hours. That loss is not a cognitive problem. It is a design problem. And its solution is not better spaced repetition software. It is learning that never leaves the context in which it will be used.

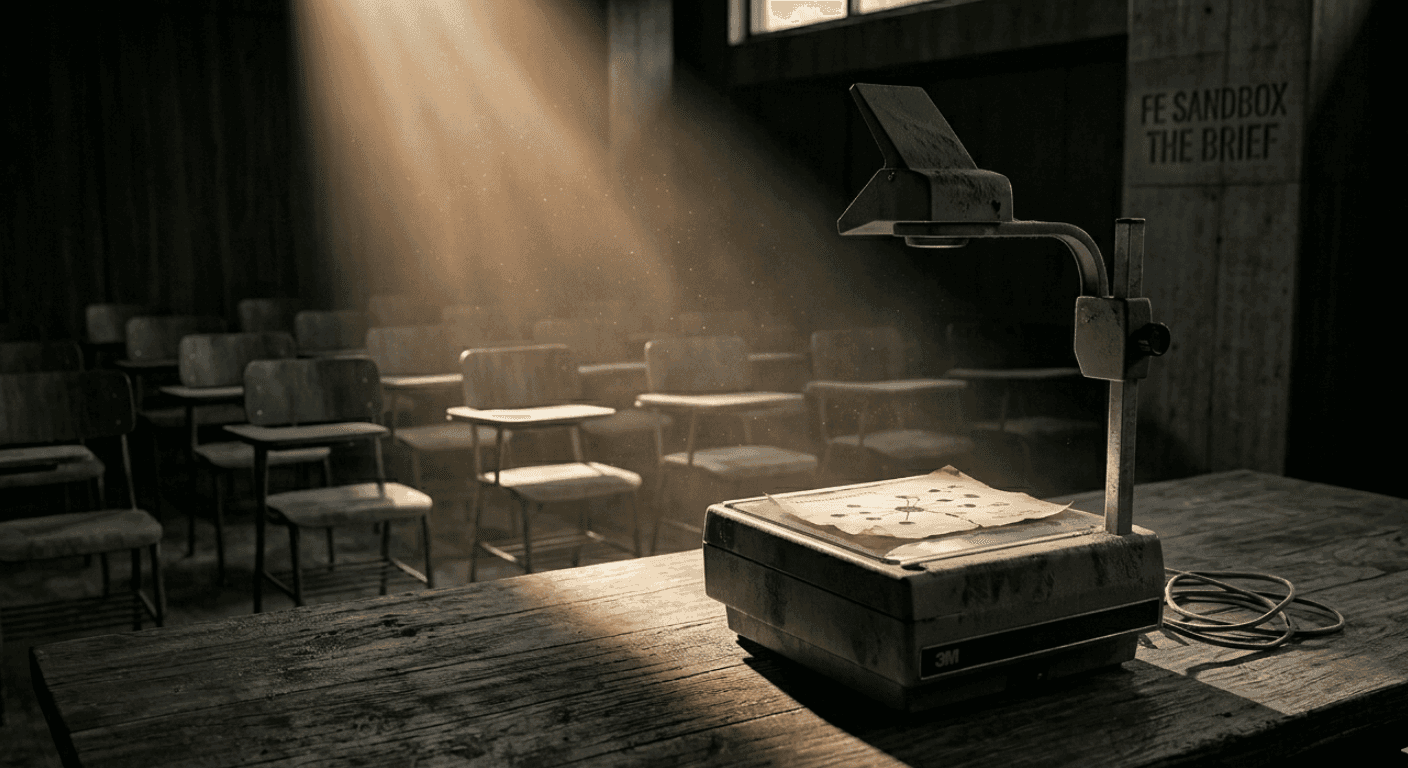

This is the structural insight behind the FE Sandbox. The Sandbox does not deliver content. It does not have modules in the traditional sense. It has sprints - compressed cycles anchored to a real institutional problem that the participant brings from their actual work. In the Surface phase, participants diagnose their own operational reality using analytical frameworks they did not previously have access to. What emerges is not a summary of what an expert thinks is wrong with the institution. It is the institution's own diagnosis, produced by the people who live inside it. That distinction matters because ownership of the diagnosis is the precondition for ownership of the solution. In the Build phase, participants apply tools and redesign processes inside a structured practice environment - with FE's AI mediator present not to deliver answers but to push reflection, demand specificity, and return every question to the participant's institutional context. The mediator is not a chatbot that helps you complete the course. It is a prompt that prevents you from moving forward until you have genuinely thought.

The WEF's Future of Jobs Report 2025 found that the skills rising fastest in workplace value - analytical thinking, adaptability, complex problem-solving - are precisely the skills that content-based courses measure least effectively. Coursera's 2026 learning trends data found that 96% of employers now say micro-credentials strengthen a candidate's application, and that 89% of micro-credential holders report building or strengthening soft skills in the process. The signal is clear: the market is moving toward demonstrated competency over time-in-training. The institutions that will lead in professional development are those that design for the former rather than defaulting to the latter.

The practical question for any HEI or EdTech company reading this is not whether to abandon the course format entirely. It is whether the learning experiences you design can answer one question that the course has never had to answer: how do you know the person operates differently afterward? The FE Sandbox was built around that question. The ninety-day Operate phase, with its three structured checkpoints, exists precisely because the Demo Day is not the end of the program. It is the end of the intensive phase. What comes after is the verification that something changed in the real operation, not the simulated one. If you cannot answer that question about your own professional development offer - if you have no mechanism to know whether your participants operate differently three months after the program closed - then you are still selling the course, whatever you choose to call it.

Thiago Chaer

Editor-in-Chief and Founder of Future Education
